August 27, 2024

Mohenjo

Business, Food For Thought, Human Interest, Political, Science, Technical

amazon, business, Business News, current-events, Future, Hotels, human-rights, medicine, mental-health, research, Science, Science News, technology, Technology News, travel, vacation

I’m a full-time freelancer, which means I spend my days writing articles from my house. But once upon a time, I commuted to an office every day where I was bombarded with meetings, assignments, Slack channels, and project check-ins.

I like to give each task my full undivided attention, so when something ripped my focus away—like a Slack DM or a coworker walking by—I felt like I got major attention whiplash. I’d lose my flow, and it’d take me a few minutes to get back in it. For a long time, I felt like something was wrong with me because I couldn’t flip between tasks like some of my coworkers, who seemed gifted at doing multiple things at once. But I’ve since learned I’m not a freak (at least not in this way) and that human brains aren’t built for multitasking.

In fact, your brain can only really handle one thing at a time, so when you go through your inbox during a team meeting, you’re not really effectively doing both of these things at the same time. Instead, “your attention is switching—and if your attention is on email then you’re not paying attention to the Zoom meeting,” says Gloria Mark, PhD, Chancellor’s Professor of Informatics at UC Irvine and author of Attention Span and the Substack The Future of Attention. As a result, you’re not accomplishing as much as you think you are (and, most likely, even less than you could be if you were zeroed in on one thing).

So if you feel like you need to do it all, all the time, you might want to rethink your approach. Here’s why multitasking won’t actually help you get ahead.

First, what even is multitasking?

It’s not like doing two things at once is always a recipe for disaster. In fact, people are actually really good at it when one or more of those things is automatic (think: walking and texting at the same time), Dr. Mark says.

But when one of your tasks requires you to think? That’s where so-called multitasking can go south (fast). Your brain can pay attention to only one thing that requires mental effort at a time. So, even if it seems like you’re making progress by juggling a few to-dos, you’re kind of half-assing multiple tasks at once.

Take the case above of emailing during a Zoom call. Dr. Mark says you’re either listening to what your manager is saying or you’re all in on crafting that email. Sure, you might hear a keyword—like your name—but you won’t really be able to digest what’s being said. In this sense, “multitasking really means switching your attention between things,” Dr. Mark says.

Here’s why multitasking doesn’t work—and can actually work against you

Not only is your brain incapable of completing mental tasks concurrently, but attempting to do so is terrible for your performance and well-being.

People make more mistakes when they try to do multiple things at once. “There’ve been decades of laboratory studies that show when people are multitasking—again, they’re switching their attention between different tasks—they make more errors,” Dr. Mark says. One study, for example, found that physicians were more likely to write an incorrect prescription when they did two things at once, like typing on a computer while answering a patient’s question. (Making a mental note to force my doctor to 100% focus on me during appointments.)

The consequences can get pretty dire: If you’re driving and talking on the phone, even if it’s hands-free, you’re not fully dialed into what’s happening around you. As a result, you might not see a car drift into your lane as quickly as you would if the road had your full focus, says Anthony Wagner, PhD, deputy director of the Stanford Wu Tsai Neurosciences Institute.

Asiya Hotaman/Getty Images

__________________________________________

August 26, 2024

Mohenjo

When it came time for Itzy Morales Pantoja to start her Ph.D. in cellular and molecular medicine, she chose a laboratory that used stem cells—not only animals—for its research. Morales Pantoja had just spent two years studying multiple sclerosis in mouse models. As an undergraduate, she’d been responsible for giving the animals painful injections to induce the disease and then observing as they lost their ability to move. She did her best to treat the mice gently, but she knew they were suffering. “As soon as I got close to them, they’d start peeing—a sign of stress,” she says. “They knew what was coming.”

Even though the mouse work was emotionally “very, very difficult,” Morales Pantoja remained committed to her research out of a desire to help her sister, who has multiple sclerosis. Three years after the project wrapped up, however, Morales Pantoja was crushed to find that none of her results would be of any direct help to people like her sister. An antioxidant she’d tested seemed promising in mice, but in human samples it was ineffective.

This was a disappointment but not a surprise. Around 90 percent of novel drugs that work in animal models fail in human clinical trials—an attrition rate that contributes to a $2.3-billion average price tag for every new drug that comes to market.

Today Morales Pantoja is a postdoctoral fellow at the Johns Hopkins Center for Alternatives to Animal Testing, where she is helping to develop lab-grown models of the human brain. The goal is to advance scientific understanding of neurodegeneration while moving the field beyond what some researchers see as an antiquated reliance on animal models.

Millions of rodents, dogs, monkeys, rabbits, birds, cats, fish, and other animals are used every year for research purposes worldwide. Exact numbers are hard to come by, but advocacy group Cruelty Free International estimated that 192 million animals were used in 2015. Most of this work occurs in four broad domains: cosmetics and personal products, chemical toxicity testing, drug development, and drug-discovery research.

Animal-based studies have contributed to important findings and lifesaving medical advancements. The COVID vaccines, for instance, were developed in animals, including mice and nonhuman primates. Animal models have also been critical in advancing AIDS drugs and in developing treatments for leukemia and other cancers, among many other uses.

But animal studies often fall short of producing useful results. They may weed out possibly effective drugs or miss toxicity in humans. They have failed to deliver breakthroughs in certain fields of medicine, including neurological conditions. A 2014 study estimated that candidate therapies for Alzheimer’s disease developed in animal models have failed in clinical trials about 99.6 percent of the time. “As questions about human biology and variability get more complex, we are bumping up against the limits of animal models,” says Paul Locke, an environmental health scientist and attorney at the Johns Hopkins Bloomberg School of Public Health. “The thing you run into with animals—and there’s no way to get around this—is that animal biology is just too different from human biology.” Other species are no longer providing the insights about human biology—including at the cellular and subcellular levels—that scientists today need to achieve innovation.

A growing, multidisciplinary community of researchers around the world is investigating alternatives to animal models. Some are motivated by concerns about animal welfare, but for many, sparing the lives of millions of creatures is just an added bonus. They are driven primarily to create technologies and methods that will approximate human biology and variability better than animals do.

Millions of animals are used for research purposes every year, but their efficacy is increasingly limited. Henrik Sorensen/Getty Images

__________________________________________

August 26, 2024

Mohenjo

When people think of remote jobs, rarely do they think they’ll be making a substantial salary. However, there are several industries that offer fully remote, in-demand jobs that have salaries of more than $100,000.

If you’re looking to land a six-figure job or are asking yourself what jobs are in demand, look no further. FlexJobs analyzed its job database from February 1, 2024, through July 30, 2024, to find the most in-demand, fully remote jobs that offer high salaries. The list below features jobs that offer $100,000-plus annual salaries, according to Payscale.

Fully Remote Jobs With $100K+ Salaries

Are you looking to make a high salary, but not sure where to start? These in-demand jobs are a great launching point for your job search.

1. Senior Customer Success Manager

Median salary: $101,184

Senior customer success managers oversee the relationships between a brand and its clients, ensuring satisfaction, retention, and growth. These professionals work closely with sales and support teams to provide strategic solutions, address client needs, and help clients meet their goals with the company’s products or services.

2. Account Director

Median salary: $104,053

Account directors develop and execute strategies to meet clients’ business objectives, ensuring client satisfaction while driving revenue growth. This role often involves coordinating internal teams to manage key client accounts and deliver high-quality services.

Source Photos: Artem Podrez/Pexels and Mackenzie Marco/Unsplash

__________________________________________

August 25, 2024

Mohenjo

When doctors ask Sara Gehrig to describe her pain, she often says it is indescribable. Stabbing, burning, aching—those words frequently fail to depict sensations that have persisted for so long they are now a part of her, like her bones and skin. “My pain is like an extra limb that comes along with me every day.”

Gehrig, a former yoga instructor and personal trainer who lives in Wisconsin, is 44 years old. At the age of 17, she discovered she had spinal stenosis, a narrowing of the spinal canal that puts pressure on the nerves there. She experienced bursts of excruciating pain in her back and buttocks and running down her legs. That pain has spread over the years, despite attempts to fend it off with physical therapy, anti-inflammatory injections, and multiple surgeries. Over-the-counter medications such as ibuprofen (Advil) provide little relief. And she is allergic to the most potent painkillers—prescription opioids—which can induce violent vomiting.

Today her agony typically hovers at a 7 out of 10 on the standard numerical scale used to rate pain, where 0 is no pain and 10 is the most severe imaginable. Occasionally her pain flares to a 9 or 10. At one point, before her doctor convinced her to take antidepressants, Gehrig struggled with thoughts of suicide. “For many with chronic pain, it’s always in their back pocket,” she says. “It’s not that we want to die. We want the pain to go away.”

Gehrig says she would be willing to try another type of painkiller, but only if she knew it was safe. She keeps up with the latest research, so she was interested to hear earlier this year that Vertex Pharmaceuticals was testing a new drug that works differently than opioids and other pain medications.

That drug, a pill called VX-548, blocks pain signals before they can reach the brain. It gums up sodium channels in peripheral nerve cells, and obstructed channels make it hard for those cells to transmit pain sensations. Because the drug acts only on the peripheral nerves, it does not carry the potential for addiction associated with opioids—oxycodone (OxyContin) and similar drugs exert their effects on the brain and spinal cord and thus can trigger the brain’s reward centers and an addiction cycle.

In January Vertex announced promising results of clinical trials of VX-548, which it is calling suzetrigine, showing that it dampened acute pain levels by about one half on that 0-to-10 scale. The company is applying for U.S. Food and Drug Administration approval for the drug this year.

Other pain drugs that target sodium channels are now being developed, some by firms motivated by Vertex’s success. Navega Therapeutics, led by biomedical engineer Ana Moreno, is even using molecular-editing tools such as CRISPR to suppress genes involved in chronic pain. “We are definitely hopeful that we can replace opioids, and that’s the goal here,” she says.

One in five U.S. adults—51.6 million people as of 2021—is living with chronic pain. New cases arise more often than other common conditions, such as diabetes, depression, and high blood pressure. Yet pain treatments have not kept pace with the need. There are over-the-counter pills such as aspirin, acetaminophen (Tylenol), and nonsteroidal anti-inflammatories (NSAIDs) such as Advil. And there are opioids. The glaring inadequacy of existing medications to alleviate human suffering has fueled the ongoing opioid epidemic, which has led to more than 730,000 overdose deaths since its start.

Samantha Mash

__________________________________________

August 25, 2024

Mohenjo

Dear Prudence,

I’m a teacher, and I spend a lot of time with my co-workers. Everyone is very family-oriented and loves kids, so usually when people get married, it’s not uncommon for a teacher to ask something along the lines of: When are you going to have a baby? I very naively thought that it would be easy for us to get pregnant since we have no health issues. Therefore, I told a few work friends that we were starting the process of trying, and when they would ask how it was going, even though I was starting to get frustrated, I would still make some jokes like, “Winter break is coming—maybe we’ll get a little Christmas surprise!”

Unfortunately, now it’s been almost two years with no results. We have started to go to fertility clinics and recently found out that my husband’s sperm production is the cause of our infertility. I choose not to share this medical information with my coworkers because it’s so personal. However, I am still getting barraged by teachers giving me all this advice about what I can do to prepare my body for pregnancy. This is even more difficult to hear since I know that it’s not my body that is the problem, but I would never tell them that. They still come up to me all the time to ask me if I’m pregnant and whether I have any news.

I’ve personally been struggling with some depression due to this, so I usually just put on a happy face and say, “No news yet. I’ll make an announcement when there’s some news to be shared.” What I really want to say is, “Will you please stop asking about my reproductive health?” But that’s very rude, especially since I’m the one who opened the door to this side of my life. How do I firmly yet politely say to some of the more well-wishing teachers that this is a topic that I just do not wish to discuss anymore?

—No Baby News Yet

Dear No Baby News,

Your fellow teachers have apparently not gotten the memo yet that it’s 2024 and constantly asking questions about whether someone else is or wants to be pregnant is totally intrusive! But let’s deal with your reality: You may have opened the door for their comments by mentioning you are trying to get pregnant. Still, that doesn’t give them the right to follow up constantly. So many people struggle with infertility—even Democratic vice presidential nominee Tim Walz and his wife have talked openly about their difficulties!—it’s really time to get with the program. You don’t want to be rude, but I think you just have to say, maybe when you are in the teachers’ lounge with a group or at lunch: “I know I mentioned that we were trying for a baby, but we’re taking a break, so it would be great if you didn’t bring it up, as it’s sensitive right now.” That’s not rude. It’s taking care of yourself and your mental health.

That said, I am also concerned about how you’re casting blame for your infertility. Why was it important for Prudie to know that the issue is on your husband’s side? You say it’s “not your body that’s the problem.” Ouch. Imagine if the primary struggle with getting pregnant was on you, and that’s how your husband described it. It takes two (or at least a sperm and an egg) to get pregnant; your infertility is a shared struggle. I suggest you start thinking that way instead of blaming your partner for something outside of his control.

__________________________________________

August 24, 2024

Mohenjo

Most of the matter in our universe is invisible. We can measure the gravitational pull of this “dark matter” on the orbits of stars and galaxies. We can see the way it bends light around itself and can detect its effect on the light left over from the primordial plasma of the hot big bang. We have measured these signals with exquisite precision. We have every reason to believe dark matter is everywhere. Yet we still don’t know what it is.

We have been trying to detect dark matter in experiments for decades now, to no avail. Maybe our first detection is just around the corner. But the long wait has prompted some dark matter hunters to wonder whether we’re looking in the wrong place or in the wrong way. Many experimental efforts have focused on a relatively small number of possible identities for dark matter—those that seem likely to simultaneously solve other problems in physics. Still, there’s no guarantee that these other puzzles and the dark matter quandary are related. Increasingly, physicists acknowledge that we may have to search for a wider range of possible explanations. The scope of the problem is both intimidating and exhilarating.

At the same time, we are starting to grapple with the sobering idea that we may never nail down the nature of dark matter at all. In the early days of dark matter hunting, this notion seemed absurd. We had lots of good theories and plenty of experimental options for testing them. But the easy roads have mostly been traveled, and dark matter has proved more mysterious than we ever imagined. It’s entirely possible that dark matter behaves in a way that current experiments aren’t well-suited to detect—or even that it ignores regular matter completely. If it doesn’t interact with standard atoms through any mechanism besides gravity, it will be almost impossible to detect it in a laboratory. In that case, we can still hope to learn about dark matter by mapping its presence throughout the universe. But there is a chance that dark matter will prove so elusive we may never understand its true nature.

On a warm summer evening in August 2022, we huddled with a few other physicists around a table at the University of Washington. We were there to discuss the culmination of the “Snowmass Process,” a year-long study that the U.S. particle physics community undertakes every decade or so to agree on priorities for future research. We were tasked with summing up the progress and potential of dark matter searches. The job of communicating just how many possibilities there are for explaining dark matter, and the many ideas that exist to explore them, felt daunting.

We are at a special moment in the quest for dark matter. Since the 1990s thousands of investigators have searched exhaustively for particles that might constitute dark matter. By now they’ve eliminated many of the simplest, easiest possibilities. Nevertheless, most physicists are convinced dark matter is out there and represents some distinct form of matter.

A universe without dark matter would require striking modifications to the laws of gravity as we currently understand them, which are based on Einstein’s general theory of relativity. Updating the theory in a way that avoids the need for dark matter—either by adjusting the equations of general relativity while keeping the same underlying framework or by introducing some new paradigm that replaces general relativity altogether—seems exceptionally difficult.

The changes would have to mimic the effects of dark matter in astrophysical systems ranging from giant clusters of galaxies to the Milky Way’s smallest satellite galaxies. In other words, they would need to apply across an enormous range of scales in distance and time, without contradicting the host of other precise measurements we’ve gathered about how gravity works. The modifications would also need to explain why, if dark matter is just a modification to gravity—which is universally associated with all matter—not all galaxies and clusters appear to contain dark matter. Moreover, the most sophisticated attempts to formulate self-consistent theories of modified gravity to explain away dark matter end up invoking a type of dark matter anyway, to match the ripples we observe in the cosmic microwave background, leftover light from the big bang.

Olena Shmahalo

__________________________________________

August 24, 2024

Mohenjo

Many of us have some aspect of our personalities we wish we could change. Maybe you’d love to be a bit more outgoing, daring, resilient, or hard-working. But it can feel as if shifting something as fundamental as your personality is mission impossible.

Aren’t we stuck with the traits we’re born with?

Actually no, says science. Of course, genetics plays some role in personality. As anyone who has dealt with two or more toddlers can tell you, some of us are born shyer or chattier than others, and these tendencies follow us to some extent throughout our lives.

But when one 64-year-long study examined personality tests for the same individuals over decades, it found basically no relationship between people’s results in their teens and their later years. You are a totally different person at 72 than you were at 14.

Which invites the question: If personality shifts over time, can you consciously control the process? Can you speed it up? Can you direct it? The answer, according to both personal experience and research, appears to be yes.

From teenage slacker to successful achiever

We’ll start with a personal story from Shannon Sauer-Zavala, a University of Kentucky psychology professor. In a recent Psychology Today post, she explained she definitely wasn’t voted most likely to succeed in high school. In fact, she was a shy, messy wallflower who skipped so many math classes she needed to repeat algebra and was repeatedly told by teachers she “wasn’t living up to her potential.”

Now she has a PhD, a TEDx Talk, and a successful career under her belt, and she regularly puts herself out there as living proof that personality change is possible.

“I love telling people about my own personality change story as a way to bust the myth that traits are set in stone,” she writes. So how did she do it?

Sauer-Zavala seems to have been lucky enough not to be carrying any extreme trauma or a difficult diagnosis. She was just a run-of-the-mill, low-motivation high school kid, which allowed her to mold her personality with little more than curiosity, passion, and a series of small, doable behavior shifts.

First, she stumbled on psychology in college and discovered something she truly was interested in.

“I was not a strong student in high school and that definitely carried over into college. In my freshman year, unclear on where to focus my studies, I took an Introduction to Psychology course that caught my eye; despite the 8 am start time, I managed to get myself out of bed to attend class. I was rewarded by performing very well on the first exam and the teaching assistant encouraged me to ‘seriously consider majoring in Psychology,’” Sauer-Zavala reports.

Photo: Getty Images

__________________________________________

August 23, 2024

Mohenjo

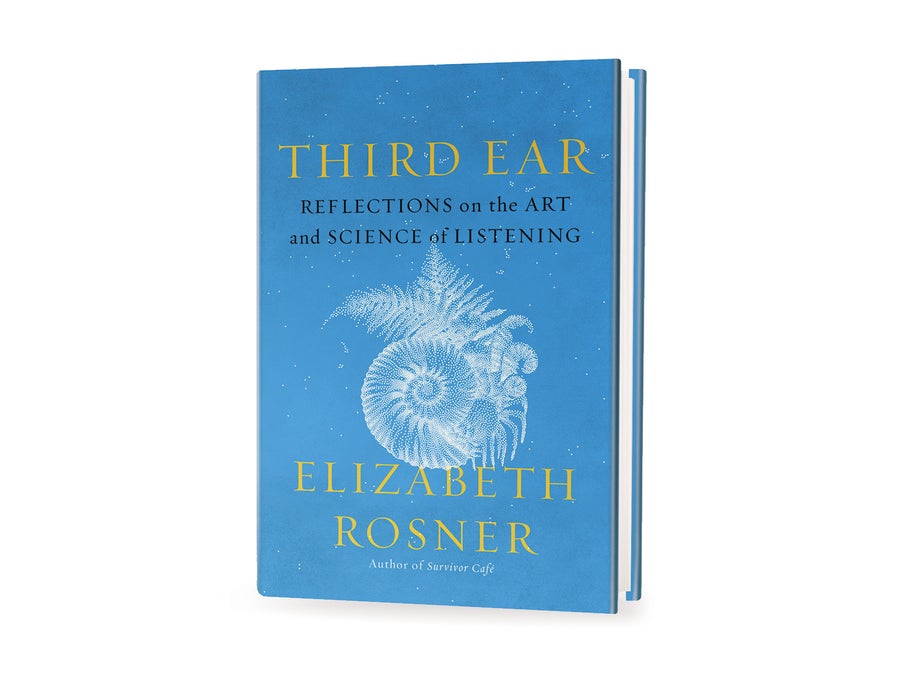

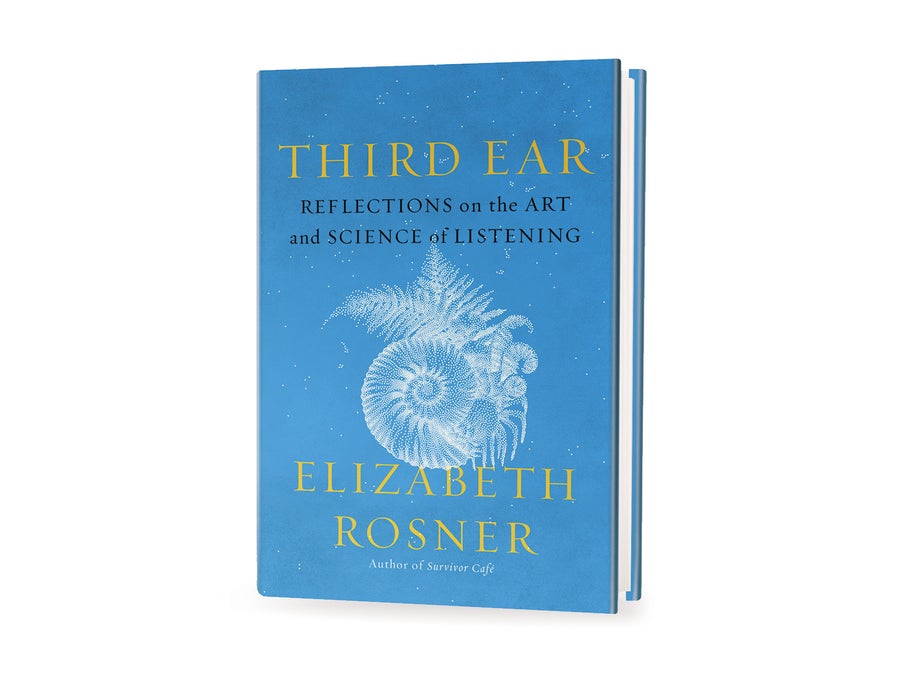

As a child of Holocaust survivors, growing up in a multilingual immigrant household, author Elizabeth Rosner became a careful listener of both the spoken and the unspoken. Her expansive, fluid meditation on so-called third-ear listening—a deeply attuned, intuitive way of perceiving the world that transcends the physically audible—is rooted in personal experience, but the contemplative vignettes explore our sonic universe. Drawing together topics ranging from the rise of podcasting to the vibration-detection sensitivity of an elephant’s foot, this poignant exploration of the hidden depths of the soundscapes around us reveals the importance of listening with more than just our ears.

Book Description

This illuminating book weaves personal stories of a multilingual upbringing with the latest scientific breakthroughs in interspecies communication to show how the skill of deep listening enhances our curiosity and empathy toward the world around us.

Third Ear braids together personal narrative with scholarly inquiry to examine the power of listening to build interpersonal empathy and social transformation. A daughter of Holocaust survivors, Rosner shares stories from growing up in a home where six languages were spoken to interrogate how psychotherapy, neurolinguistics, and creativity can illuminate the complex ways we are impacted by the sounds and silences of others.

Drawing on expertise from journalists, podcasters, performers, translators, acoustic biologists, spiritual leaders, composers, and educators, this hybrid text moves fluidly along a spectrum from molecular to global to reveal how third-ear listening can be a collective means for increased understanding and connection to the natural world.

ELIZABETH ROSNER is a bestselling novelist, poet, and essayist. Her works include Survivor Café: The Legacy of Trauma and the Labyrinth of Memory, a finalist for the National Jewish Book Award, and the novel Electric City, named a best book by NPR. Rosner’s essays have appeared in The New York Times Magazine, Elle, and numerous anthologies. She lives in Berkeley, California.

Praise For This Book

Literary Hub, A Most Anticipated Book of the Year

“To masterfully blend memoir with science writing is to create one of the most compelling kinds of book—one whose insights are both cerebral and emotional.” —Jessie Gaynor, Literary Hub

“Deeply sourced, devotedly researched, and refreshingly candid, Rosner’s searing observations on the various ways this crazy world can be navigated, appreciated, and understood open new avenues for thought and exploration.” —Booklist (starred review)

“A book packed with perceptions and revelations. Science and art meet in this eloquent study of the aural world around us.” —Kirkus Reviews (starred review)

“[A] lyrical blend of memoir and science . . . This soothes the soul.” —Publishers Weekly

“Deep listening found here. Connecting our collective soundscape with her own, Rosner reveals a spirit and depth of insight few have shown in this realm. Listen to just one of her paragraphs, and your future footfalls will never sound the same.” ––Edie Meidav, author of Another Love Discourse and Lola, California

“There is a world of knowledge of listening floating around us, in sound and on the page. No one has connected these stories to their own life and memories better than Elizabeth Rosner. I thought I knew this material after years of swimming in it, but she has revealed depths of sonic purpose through the unique connections she draws. This is a rare and profound book.” ––David Rothenberg, author of Whale Music and Secret Sounds of Ponds

“Elizabeth Rosner asks us to consider how listening can profoundly shape who we are, long before we really understand what it is we’ve heard.” —Bonnie Tsui, bestselling author of Why We Swim and American Chinatown

“Elizabeth Rosner’s Third Ear should be required for the entire human race. Rosner is one of the greatest writers and thinkers of our time—with insight into this century’s difficult socio-political and ethical questions. With clarity and intimacy, Rosner renders a sonic universe in which reciprocity connects all of life through deep listening. We are one small part of a large, delicate ecosystem—from the soil bioacoustics to the toxic pesticides we use, from the extinction of different species to the threat of our own. Third Ear gives us a second chance to look inside ourselves and find something human in us.” —E. J. Koh, author of The Liberators and The Magical Language of Others

“Third Ear: Reflections on the Art and Science of Listening is a marvel—a beautifully-written, meticulously researched, and fascinating exploration of the transformative power of listening. If you’re anything like me, your copy will be dog-eared and underlined, like all of your favorite books.” —Adrienne Brodeur, author of Wild Game

__________________________________________

August 23, 2024

Mohenjo

It’s a rite of passage for men. You’re in your 40s, you’re at your annual checkup, and suddenly you hear the snap of a rubber glove. The doctor slathers on some lube and tells you to bend over. Boom—a finger right up your butthole.

The digital rectal exam, or DRE, has long been used to screen for signs of prostate cancer—the most common non-skin cancer in men, killer of over 30,000 a year. Most men understand that’s important. We may even know fathers or uncles or friends who’ve suffered from prostate cancer. But it’s still a little bit of a shock to be probed so intimately by a person you only see once a year, at most. The DRE is so infamous a procedure that it’s turned into a kind of folk knowledge, a proto-meme every guy hears about long before it happens to him. It’s the subject of uncomfortable jokes in the locker room, in the examination room, and in Hollywood. Who can forget M. Emmet Walsh lubing up before enthusiastically plugging Chevy Chase in Fletch?

But at my most recent physical, my longtime primary care physician did not seem to be prepping for the probe. I’m pushing 50. When I asked—a little hesitantly—she told me that she’s phased out the DRE for her patients in favor of a blood test that, while not foolproof, is less likely to result in false-positive results. And she’s not the only one. I soon learned that thanks to a wave of research on the benefits of blood screening and the drawbacks of the digital exam, the DRE is nearing extinction as a screening tool. While I doubt anyone, doctor or patient, will miss the DRE, the test had so much mythology associated with it that its quiet death felt a little shocking. The doctor’s not gonna stick a finger up my butt anymore? All that for nothing?

“Before we had a really good blood test, the rectal exam was really the only way we had to screen the prostate for cancer,” Adam Weiner, a urologic oncologist at Cedars-Sinai in Los Angeles, told me. In the exam, the physician inserts a finger in a patient’s rectum and presses against the prostate from the back. “You’re looking for nodularity—a bump that’s firmer than the area around it,” Weiner said.

The day med students learn the DRE has long been “a special day,” as Weiner put it: “Nobody misses it, as you can imagine.” Paid medical actors serve as subjects as the students practice the test—not only the actual prostate exam but the bedside manner that makes the exam easier, specifically “what you’re saying and how you’re positioning the patient.”

Because prostate cancer is such a threat, for many years, screening with the DRE was a standard part of every primary care physician’s job. Daniel Stone, a PCP in Los Angeles, recalled one of his med school instructors telling a classmate who’d expressed distaste at the idea of a DRE, “If you don’t do the rectal exam, you’re the asshole.”

After doing such exams their whole career, doctors assured me, they do not find DREs onerous or particularly gross. Sure, they don’t love them—mostly because they make patients so nervous—but they’re fine. “My patients will often say, ‘Oh, I feel sorry for you, having to do that exam,’ ” Stone said. He reminds them that he’s been performing DREs for 30 years: “It’s like looking in your ears or your mouth,” he tells them.

That jocular sympathy expressed by patients is illustrative: The digital rectal exam just plain makes men nervous. Many try to defuse their anxiety with jokes in the exam room. “Probably a third of the men who get the exam say ‘Oh, my favorite part,’ ” Stone said. “That’s almost routine.” My PCP told me she often had men jokingly (but also not jokingly) note the smallness of her fingers. Though the discomfort of a DRE pales in comparison to what basically any woman endures in a garden-variety OB-GYN appointment, many men have long viewed the exam as a barely bearable indignity. I certainly heard it described this way by wincing older relatives, often accompanied by casual homophobia or a feeble joke about prison rape. (“Doc, you ever serve time?” Fletch cracks.)

Illustration by Natalie Matthews-Ramo

__________________________________________

August 22, 2024

Mohenjo

Many traits that are expected of scientists—dispassion, detachment, prodigious attention to detail, putting caveats on everything, and always burying the lede—are less helpful in day-to-day life. The contrast between scientific and everyday conversation, for example, is one reason that so much scientific communication fails to hit the mark with broader audiences. (One observer put it bluntly: “Scientific writing is all too often … bad writing.”) One aspect of science, however, is a good model for our behavior, especially in times like these, when so many people seem to be sure that they are right and their opponents are wrong. It is the ability to say, “Wait—hold on. I might have been wrong.”

Not all scientists live up to this ideal, of course. But history offers admirable examples of scientists admitting they were wrong and changing their views in the face of new evidence and arguments. My favorite comes from the history of plate tectonics.

In the early 20th century German geophysicist and meteorologist Alfred Wegener proposed the theory of continental drift, suggesting that continents were not fixed on Earth’s surface but had migrated widely during the planet’s history. Wegener was not a crank: he was a prominent scientist who had made important contributions to meteorology and polar research. The idea that the now separate continents had once been somehow connected was supported by extensive evidence from stratigraphy and paleontology—evidence that had already inspired other theories of continental mobility. His proposal did not get ignored: it was discussed throughout Europe, North America, South Africa and Australia in the 1920s and early 1930s. But a majority of scientists rejected it, particularly in the U.S., where geologists objected to the form of the theory and geophysicists clung to a model of Earth that seemed to be incompatible with moving continents.

In the late 1950s and 1960s the debate was reopened as new evidence flooded in, especially from the ocean floor. By the mid-1960s some leading scientists—including Patrick M. S. Blackett of Imperial College London, Harry Hammond Hess of Princeton University, John Tuzo Wilson of the University of Toronto and Edward Bullard of the University of Cambridge—endorsed the idea of continental motions. Between 1967 and 1968 this revival began to coalesce as the theory of plate tectonics.

Not, however, at what was then known as the Lamont Geological Laboratory, part of Columbia University. Under the direction of geophysicist Maurice Ewing, Lamont was one of the world’s most respected centers of marine geophysical research in the 1950s and 1960s. With financial and logistical support from the U.S. Navy, Lamont researchers amassed prodigious amounts of data on the heat flow, seismicity, bathymetry and structure of the seafloor. But Lamont under Ewing was a bastion of resistance to the new theory.

It’s not clear why Ewing so strongly opposed continental drift. It may be that having trained in electrical engineering, physics and math, he never really warmed to geological questions. The evidence suggests that Ewing never engaged with Wegener’s work. In a grant proposal written in 1947, Ewing even confused “Wegener” with “Wagner,” referring to the “Wagner hypothesis of continental drift.”

And Ewing was not alone at Lamont in his ignorance of debates in geology. One scientist recalled that in 1965 he personally “was only vaguely aware of the hypothesis” [of continental drift] and that colleagues at Lamont who were familiar with it were mostly “skeptical and dismissive.” Ewing was also known to be autocratic; one oceanographer called him the “oceanographic equivalent of General Patton.” It wasn’t an environment that encouraged dissent.

One scientist who did change his mind was Xavier Le Pichon. In the spring of 1966 Le Pichon had just defended his Ph.D. thesis, which denied the possibility of regional crustal mobility. After seeing some key data at Lamont—data that had been presented at a meeting of the American Geophysical Union just that week—he went home and asked his wife to pour him a drink, saying, “The conclusions of my thesis are wrong.”

Scott Brundage

__________________________________________
